Most early-stage founders say they need "more distribution."

I ran a short distribution sprint for BeVisible to answer one question:

Can an agent do directory distribution faster than me, without losing traceability?

The workflow used Codex CLI for execution and one structured submission tracker (a sheet or CSV) as the single source of truth.

The tracker logged every attempt with:

- directory

- URL

- status (`submitted`, `blocked`, `needs_verification`)

- notes

- last attempt timestamp
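A minimal sketch of what one tracker row could look like as CSV, assuming Python and the five fields listed above. The column names, the `Attempt` class, the `write_attempts` helper, the ISO 8601 timestamp format, and the example row are illustrative assumptions, not the exact tracker used in the sprint.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields
from datetime import datetime, timezone

# The three status values named in the post; anything else is rejected.
ALLOWED_STATUSES = {"submitted", "blocked", "needs_verification"}


@dataclass
class Attempt:
    """One tracker row (hypothetical column names)."""
    directory: str
    url: str
    status: str
    notes: str
    last_attempt: str  # ISO 8601 string; the format is an assumption

    def __post_init__(self):
        if self.status not in ALLOWED_STATUSES:
            raise ValueError(f"unknown status: {self.status!r}")


def write_attempts(attempts, out):
    """Write attempts as CSV, header row first, to a file-like object."""
    writer = csv.DictWriter(out, fieldnames=[f.name for f in fields(Attempt)])
    writer.writeheader()
    for attempt in attempts:
        writer.writerow(asdict(attempt))


# Placeholder row: the directory name and URL are made up for illustration.
example = Attempt(
    directory="Example Directory",
    url="https://example.com/submit",
    status="blocked",
    notes="login wall",
    last_attempt=datetime.now(timezone.utc).isoformat(),
)

buffer = io.StringIO()
write_attempts([example], buffer)
print(buffer.getvalue())
```

Rejecting unknown status values at write time keeps the tracker countable later: every row falls into exactly one of the three buckets.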

What We Ran

- 48-hour sprint

- 135 directory attempts

- agent-first execution, manual review on uncertain cases

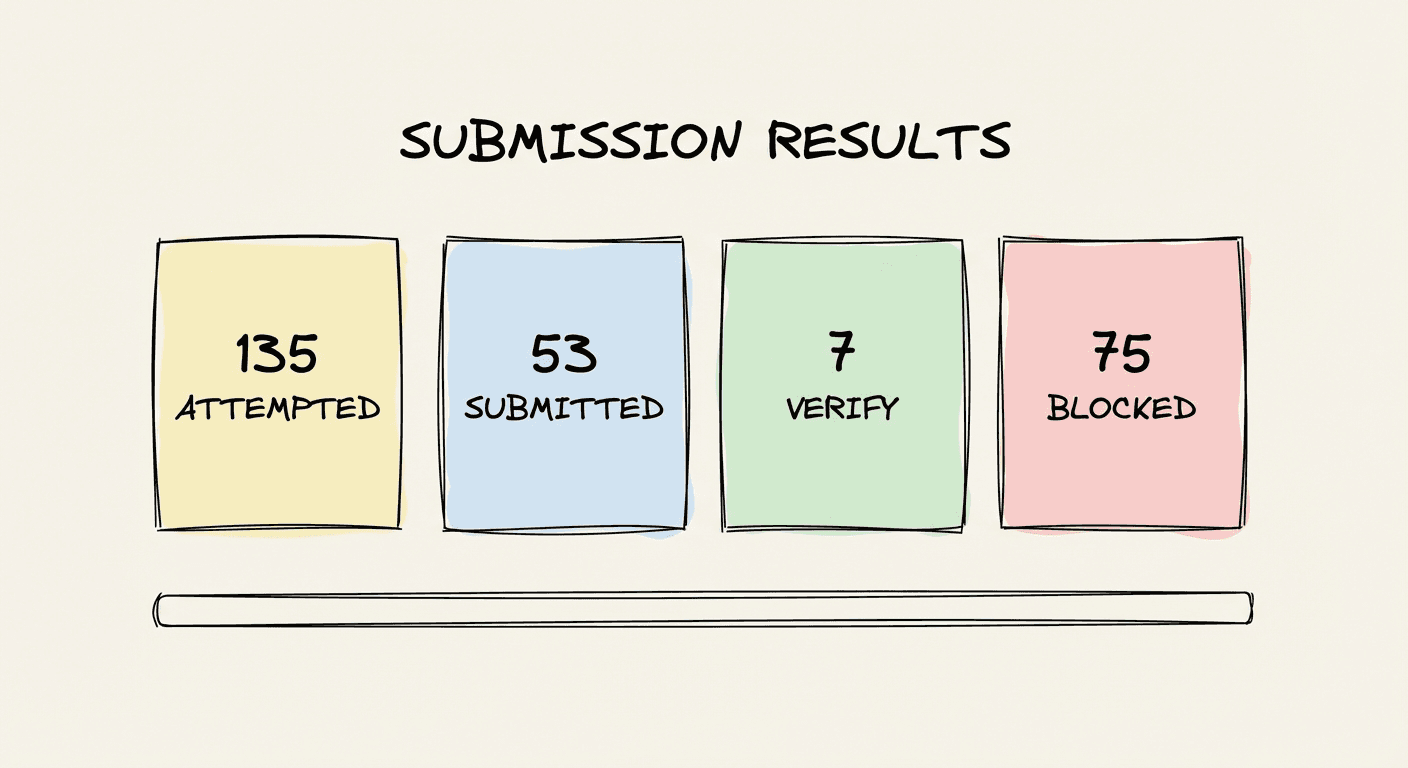

Results

- 135 attempted

- 53 submitted

- 7 needs verification

- 75 blocked

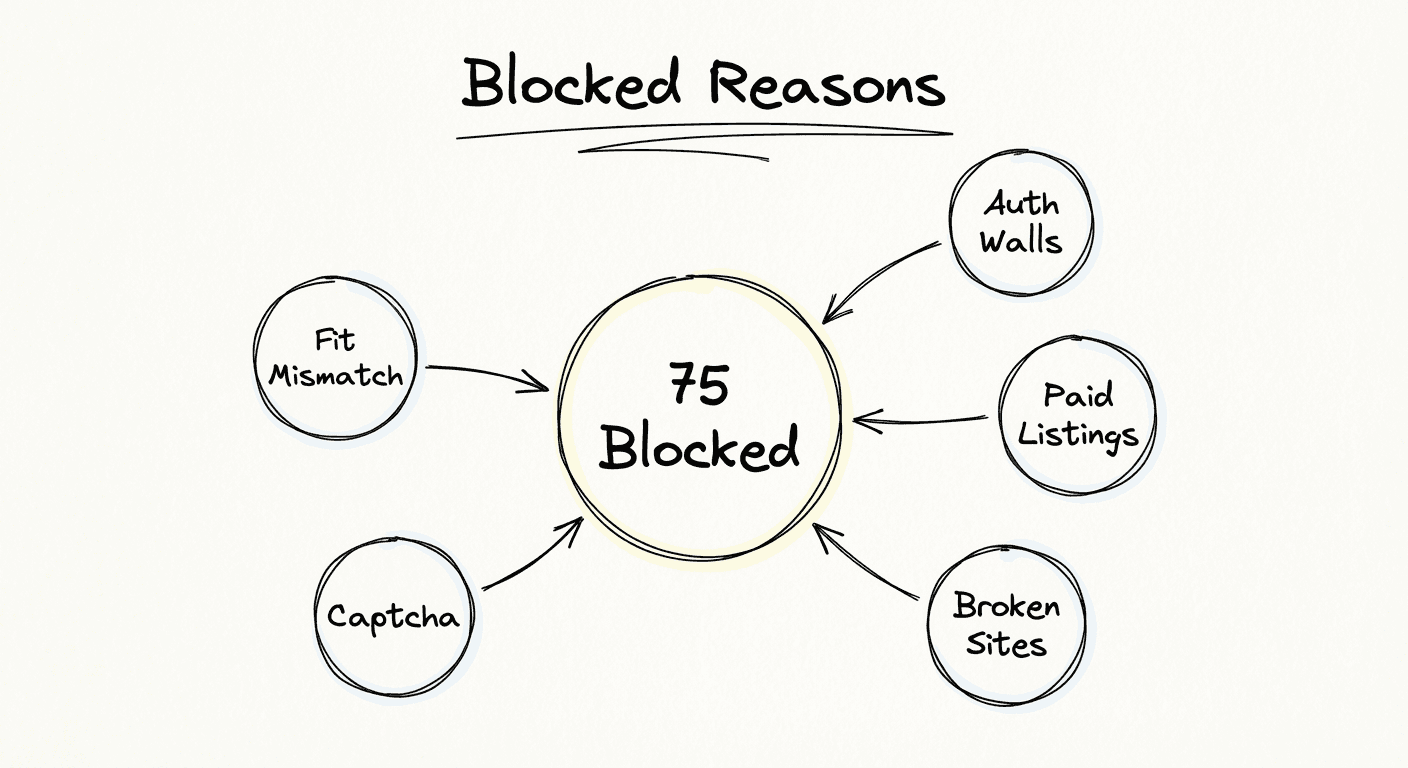
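For readers who prefer rates to raw counts, the numbers above can be turned into shares of all attempts. This is a small Python sketch that uses only the counts reported in this post; the variable names are arbitrary.

```python
# Counts reported in the Results section.
counts = {"submitted": 53, "needs_verification": 7, "blocked": 75}

total = sum(counts.values())
assert total == 135  # matches the 135 attempted

# Share of all attempts per status, rounded to one decimal place.
shares = {status: round(100 * n / total, 1) for status, n in counts.items()}

for status, pct in shares.items():
    print(f"{status}: {counts[status]} ({pct}%)")
# submitted: 53 (39.3%)
# needs_verification: 7 (5.2%)
# blocked: 75 (55.6%)
```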

Why 75 Were Blocked

Mostly channel constraints, not agent failure:

- auth/login walls

- paid-only listing flows

- broken/dead forms

- captcha/anti-bot barriers

- category/policy mismatch

What Actually Worked

The agent was strong at:

- Repetitive execution at speed

- Consistent status logging

- Quickly eliminating dead-end directories

The tracker was the real asset. Without it, this would have been "busy work" with no learning.

What Did Not Change

Automation improved throughput, not demand quality.

An agent can submit forms. It cannot make a weak channel convert.

Directory listings are useful for baseline presence, but they are not our primary growth engine on the way to $10k MRR.

What We Changed After the Sprint

We now treat directory distribution as:

- one-time coverage + light maintenance

- not a core weekly growth focus

Founder time moved to:

- high-intent communities

- conversion-focused pages

- trial-to-paid and churn improvements

If You Want to Copy This

Keep it simple:

- Use an agent for repetitive submission work.

- Track every attempt in one structured tracker.

- Cap time spent on directories.

- Evaluate channels on paid outcomes, not activity.

The Logic (So Anyone Can Run This)

- Prepare a directory list and a structured tracker.

- Give Codex CLI one explicit prompt:

  - process the full list end-to-end

  - write status + notes + timestamp for every attempt

  - keep going until every directory has a final status

- Let Codex run until completion (no manual batching).

- After completion, review only `needs_verification` items manually.

- Count totals by status and decide channel allocation from that data.

- Keep directory work as maintenance, not your primary growth engine.
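The review and counting steps above can be sketched in a few lines of Python. This assumes the tracker is a CSV with `directory` and `status` columns (hypothetical names) and treats the three statuses from this post as the only recorded outcomes. The `summarize` helper and the sample rows are made up for illustration.

```python
import csv
import io
from collections import Counter

# Assumption: these three values are the only statuses a finished run records.
FINAL_STATUSES = {"submitted", "blocked", "needs_verification"}


def summarize(rows):
    """Return (totals by status, directories to review, directories with no final status)."""
    rows = list(rows)
    totals = Counter(row["status"] for row in rows)
    to_review = [r["directory"] for r in rows if r["status"] == "needs_verification"]
    unfinished = [r["directory"] for r in rows if r["status"] not in FINAL_STATUSES]
    return totals, to_review, unfinished


# Made-up sample tracker; a real run would open the tracker file instead.
SAMPLE = """directory,url,status,notes,last_attempt
Dir A,https://example.com/a,submitted,,
Dir B,https://example.com/b,blocked,captcha,
Dir C,https://example.com/c,needs_verification,email confirmation pending,
Dir D,https://example.com/d,,,
"""

totals, to_review, unfinished = summarize(csv.DictReader(io.StringIO(SAMPLE)))
print("totals:", dict(totals))
print("review manually:", to_review)
print("no final status yet:", unfinished)
```

A non-empty `unfinished` list means the run stopped early, so it is worth checking before trusting the totals.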

Bottom Line

This sprint was valuable because it removed uncertainty fast.

Using Codex CLI + a structured tracker, we turned a vague distribution task into concrete data:

- what to keep

- what to stop

- where founder time should actually go